Multiple DataSources In Spring Batch

Here I am going to show you how to use multiple datasources in spring batch application. You may need to use multiple data sources for various reasons, for example, you may need to store table metadata for spring batch into h2 database and other business data into Oracle, MySQL or any other database.

Or another situation may occur where in production environment you do not have permission to create meta data tables for spring batch then you can use h2 database for storing meta data related information.

Prerequisites

Java 19, Spring Boot 3.1.2, Maven 3.8.5, MySQL 8.0.31

Project Setup

You can create a maven based project in your favorite IDE or tool. You can use the following pom.xml file for your project.

In the following build file notice I have used two different database dependencies – H2 and MySQL. The H2 in-memory database will be used for storing spring batch meta data tables and MySQL database will be used for storing actual business data.

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.roytuts</groupId>

<artifactId>spring-batch-multiple-datasources</artifactId>

<version>0.0.1-SNAPSHOT</version>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

<maven.compiler.source>19</maven.compiler.source>

<maven.compiler.target>19</maven.compiler.target>

</properties>

<parent>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-parent</artifactId>

<version>3.1.2</version>

</parent>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-batch</artifactId>

</dependency>

<dependency>

<groupId>com.h2database</groupId>

<artifactId>h2</artifactId>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>8.0.31</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

</plugin>

</plugins>

</build>

</project>

MySQL Database Config

I am using only MySQL database configurations in src/main/resources/application.properties file and you don’t need to configure the H2 database as it is in-memory database, so it will be available as long as the application is live.

spring.datasource.driverClassName=com.mysql.cj.jdbc.Driver

spring.datasource.url=jdbc:mysql://localhost/roytuts

spring.datasource.username=root

spring.datasource.password=root

spring.main.allow-bean-definition-overriding=trueThe spring.main.allow-bean-definition-overriding=true override the implementations of spring beans when you want to override the definition or implementation of any spring bean.

MySQL Table

The following MySQL table stores the person details from CSV file which is used in the spring batch example.

CREATE DATABASE IF NOT EXISTS `roytuts`;

USE `roytuts`;

CREATE TABLE IF NOT EXISTS `persons` (

`id` int unsigned NOT NULL AUTO_INCREMENT,

`name` varchar(50) CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci NOT NULL,

`email` varchar(150) CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci NOT NULL,

PRIMARY KEY (`id`)

) ENGINE=InnoDB AUTO_INCREMENT=8 DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_unicode_ci;DataSource Config

The following configuration class defines the data sources for H2 database and MySQL database. It also defines the transaction managers for both databases. Whenever you are working with multiple data sources or transaction managers you need to make one of them as primary.

@Configuration

public class DataSourceConfig {

@Autowired

private Environment environment;

@Primary

@Bean(name = "h2DataSource")

public DataSource h2DataSource() {

EmbeddedDatabaseBuilder embeddedDatabaseBuilder = new EmbeddedDatabaseBuilder();

return embeddedDatabaseBuilder.addScript("classpath:org/springframework/batch/core/schema-drop-h2.sql")

.addScript("classpath:org/springframework/batch/core/schema-h2.sql").setType(EmbeddedDatabaseType.H2)

.build();

}

@Bean(name = "mySQLDataSource")

public DataSource mySQLDataSource() {

return DataSourceBuilder.create().driverClassName(environment.getProperty("spring.datasource.driverClassName"))

.url(environment.getProperty("spring.datasource.url"))

.username(environment.getProperty("spring.datasource.username"))

.password(environment.getProperty("spring.datasource.password")).build();

}

@Bean

public PlatformTransactionManager mySQLDataSourceTransactionManager() {

return new DataSourceTransactionManager(mySQLDataSource());

}

@Bean

public NamedParameterJdbcTemplate namedParameterJdbcTemplate() {

return new NamedParameterJdbcTemplate(mySQLDataSource());

}

@Primary

@Bean(name = "platformTransactionManager")

public PlatformTransactionManager platformTransactionManager() {

return new DataSourceTransactionManager(h2DataSource());

}

}FieldSet Mapper

The field set mapper is used to set the value to the appropriate object after reading from the input source.

public class UserFieldSetMapper implements FieldSetMapper<User> {

@Override

public User mapFieldSet(FieldSet fieldSet) throws BindException {

User user = new User();

user.setName(fieldSet.readString(0));

user.setEmail(fieldSet.readString(1));

return user;

}

}Item Processor

Item processor basically processes each item to be transformed into something else before being written to the destination.

public class UserItemProcessor implements ItemProcessor<User, User> {

@Override

public User process(final User user) throws Exception {

final String domain = "roytuts.com";

final String name = user.getName().toUpperCase();

final String email = user.getName() + "@" + domain;

final User transformedUser = new User(name, email);

System.out.println("Converting [" + user + "] => [" + transformedUser + "]");

return transformedUser;

}

}Prepared Statement

This is required to write the value of each item to the destination and here I am going to write each item to the database table.

public class PersonsPreparedStatementSetter implements ItemPreparedStatementSetter<User> {

@Override

public void setValues(User item, PreparedStatement ps) throws SQLException {

ps.setString(1, item.getName());

ps.setString(2, item.getEmail());

}

}Spring Batch Config

Spring batch configuration configures JobRepository, Job, ItemReader, ItemProcessor, ItemWriter for the spring batch processing mechanism.

You don’t need to use @EnableBatchProcessing in spring batch 5 version. The @Configuration annotation is enough for configuring the batch things.

@Configuration

public class SpringBatchConfig {

@Autowired

@Qualifier("h2DataSource")

private DataSource dataSource;

@Autowired

private NamedParameterJdbcTemplate namedParameterJdbcTemplate;

@Autowired

private PlatformTransactionManager platformTransactionManager;

private static final String QUERY_INSERT_PERSONS = "INSERT " + "INTO persons(name, email) " + "VALUES (?, ?)";

@Bean

public JobRepository jobRepository() throws Exception {

JobRepositoryFactoryBean factory = new JobRepositoryFactoryBean();

factory.setDataSource(dataSource);

factory.setTransactionManager(platformTransactionManager);

factory.afterPropertiesSet();

return factory.getObject();

}

@Bean

// creates an item reader

public ItemReader<User> reader() {

FlatFileItemReader<User> reader = new FlatFileItemReader<User>();

// look for file user.csv

reader.setResource(new ClassPathResource("person.csv"));

// line mapper

DefaultLineMapper<User> lineMapper = new DefaultLineMapper<User>();

// each line with comma separated

lineMapper.setLineTokenizer(new DelimitedLineTokenizer());

// map file's field with object

lineMapper.setFieldSetMapper(new UserFieldSetMapper());

reader.setLineMapper(lineMapper);

return reader;

}

@Bean

// creates an instance of our UserItemProcessor for transformation

public ItemProcessor<User, User> processor() {

return new UserItemProcessor();

}

@Bean

@Transactional(rollbackFor = Exception.class)

// creates item writer

public ItemWriter<User> writer() {

JdbcBatchItemWriter<User> batchItemWriter = new JdbcBatchItemWriter<>();

batchItemWriter.setJdbcTemplate(namedParameterJdbcTemplate);

batchItemWriter.setSql(QUERY_INSERT_PERSONS);

ItemPreparedStatementSetter<User> valueSetter = new PersonsPreparedStatementSetter();

batchItemWriter.setItemPreparedStatementSetter(valueSetter);

return batchItemWriter;

}

@Bean

public Job importUserJob(Step step) throws Exception {

// need incrementer to maintain execution state

return new JobBuilder("importUserJob", jobRepository()).incrementer(new RunIdIncrementer()).flow(step).end()

.build();

}

@Bean

public Step step1(ItemReader<User> reader, ItemWriter<User> writer, ItemProcessor<User, User> processor)

throws Exception {

// chunk uses how much data to write at a time

// In this case, it writes up to five records at a time.

// Next, we configure the reader, processor, and writer

return new StepBuilder("step1", jobRepository()).<User, User>chunk(5, platformTransactionManager).reader(reader)

.processor(processor).writer(writer).build();

}

}Input File

The input file (person.csv) is a CSV (Comma Separated Value) file which has simply first name and last name pairs. This file is kept under class path folder src/main/resources.

soumitra,roy

souvik,sanyal

arup,chatterjee

suman,mukherjee

debina,guha

liton,sarkar

debabrata,poddarVO Class

The Value Object class is a simple class which has two attributes for first and last names.

public class User {

private String name;

private String email;

public User() {

}

public User(String name, String email) {

this.name = name;

this.email = email;

}

public String getName() {

return name;

}

public void setName(String name) {

this.name = name;

}

public String getEmail() {

return email;

}

public void setEmail(String email) {

this.email = email;

}

@Override

public String toString() {

return "name: " + name + ", email:" + email;

}

}Spring Boot Main Class

A class is having main method and @SpringBootApplication annotation is enough to start the spring boot application.

@SpringBootApplication

public class SpringBatch {

public static void main(String[] args) {

SpringApplication.run(SpringBatch.class, args);

}

}Testing Multiple Data Sources In Spring Batch

Here is the output of the spring batch application when run by executing the main class.

Converting [name: soumitra, email:roy] => [name: SOUMITRA, email:soumitra@roytuts.com]

Converting [name: souvik, email:sanyal] => [name: SOUVIK, email:souvik@roytuts.com]

Converting [name: arup, email:chatterjee] => [name: ARUP, email:arup@roytuts.com]

Converting [name: suman, email:mukherjee] => [name: SUMAN, email:suman@roytuts.com]

Converting [name: debina, email:guha] => [name: DEBINA, email:debina@roytuts.com]

Converting [name: liton, email:sarkar] => [name: LITON, email:liton@roytuts.com]

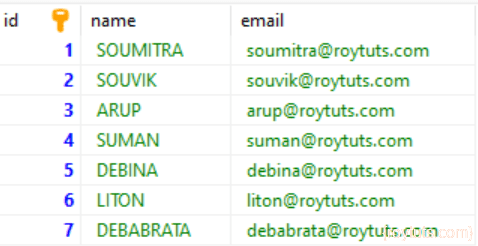

Converting [name: debabrata, email:poddar] => [name: DEBABRATA, email:debabrata@roytuts.com]Data in the MySQL database table gets inserted as:

And the insert statements when exported be like the following:

INSERT INTO `persons` (`id`, `name`, `email`) VALUES

(1, 'SOUMITRA', 'soumitra@roytuts.com'),

(2, 'SOUVIK', 'souvik@roytuts.com'),

(3, 'ARUP', 'arup@roytuts.com'),

(4, 'SUMAN', 'suman@roytuts.com'),

(5, 'DEBINA', 'debina@roytuts.com'),

(6, 'LITON', 'liton@roytuts.com'),

(7, 'DEBABRATA', 'debabrata@roytuts.com');Hope you got an idea how to use multiple datasources in spring batch application.